java

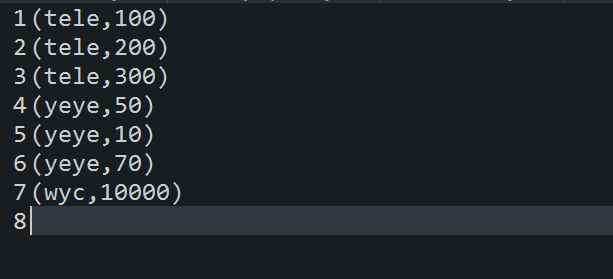

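    import java.util.Arrays;
    import java.util.List;

    import org.apache.spark.SparkConf;
    import org.apache.spark.api.java.JavaPairRDD;
    import org.apache.spark.api.java.JavaSparkContext;

    import scala.Tuple2;

    /**
     * saveAsTextFile writes the data in an RDD out as text files.
     * The path you pass can only name a directory, not a single file.
     * @author Tele
     */
    public class SaveasTextFileDemo1 {
        private static SparkConf conf = new SparkConf().setMaster("local").setAppName("saveastextfiledemo1");
        private static JavaSparkContext jsc = new JavaSparkContext(conf);

        public static void main(String[] args) {
            List<Tuple2<String, Integer>> list = Arrays.asList(
                    new Tuple2<String, Integer>("tele", 100),
                    new Tuple2<String, Integer>("tele", 200),
                    new Tuple2<String, Integer>("tele", 300),
                    new Tuple2<String, Integer>("yeye", 50),
                    new Tuple2<String, Integer>("yeye", 10),
                    new Tuple2<String, Integer>("yeye", 70),
                    new Tuple2<String, Integer>("wyc", 10000)
            );

            JavaPairRDD<String, Integer> rdd = jsc.parallelizePairs(list);

            // Save to the local file system
            rdd.saveAsTextFile("./src/main/resources/local");
            jsc.close();
        }
    }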
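Because the path passed to `saveAsTextFile` names a directory, the records end up in `part-NNNNN` files inside it (one per partition), next to a `_SUCCESS` marker and, on the local file system, hidden `.crc` checksum files. Each record is written as one line using its `toString()`, so a `Tuple2` shows up as `(tele,100)`. The following is a minimal sketch of reading such a directory back with plain Java, without Spark. The class name `ReadSavedOutput` and the helper `readParts` are hypothetical names chosen for this illustration, and the default path simply reuses the one from the example above.

```java
import java.io.IOException;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical helper, not part of Spark: reads back a saveAsTextFile output directory.
public class ReadSavedOutput {

    /** Returns every line from the part-* files in the given directory, in partition order. */
    static List<String> readParts(Path outputDir) throws IOException {
        List<Path> parts = new ArrayList<>();
        // The "part-*" glob skips the _SUCCESS marker and the hidden .crc checksum files.
        try (DirectoryStream<Path> stream = Files.newDirectoryStream(outputDir, "part-*")) {
            for (Path p : stream) {
                parts.add(p);
            }
        }
        // Sort by name so part-00000 comes before part-00001, and so on.
        Collections.sort(parts);

        List<String> lines = new ArrayList<>();
        for (Path p : parts) {
            lines.addAll(Files.readAllLines(p));
        }
        return lines;
    }

    public static void main(String[] args) throws IOException {
        // Assumed default: the directory written by the example above.
        Path dir = Paths.get(args.length > 0 ? args[0] : "./src/main/resources/local");
        for (String line : readParts(dir)) {
            System.out.println(line);
        }
    }
}
```

Inside a Spark job, `jsc.textFile(...)` pointed at the same directory would be the more usual way to load the data again; the plain-Java version is only meant to make the on-disk layout visible.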

scala

    import org.apache.spark.{SparkConf, SparkContext}

    object SaveasTextFileDemo {
      def main(args: Array[String]): Unit = {
        val conf = new SparkConf().setMaster("local").setAppName("saveastextfiledemo")
        val sc = new SparkContext(conf)

        val arr = Array(("class1", "tele"), ("class1", "yeye"), ("class2", "wyc"))

        // A single partition, so the output directory holds a single part file
        val rdd = sc.parallelize(arr, 1)

        rdd.saveAsTextFile("./src/main/resources/myfile")

        // Release the context once the job is done
        sc.stop()
      }
    }