Python (26): Crawler Practice (Scraping Images, Scraping QQ Numbers)

Published: 2021-06-30 16:35:25

Exercise: Download images from the web to local disk

The images come from Yihaodian (1号店, yhd.com).

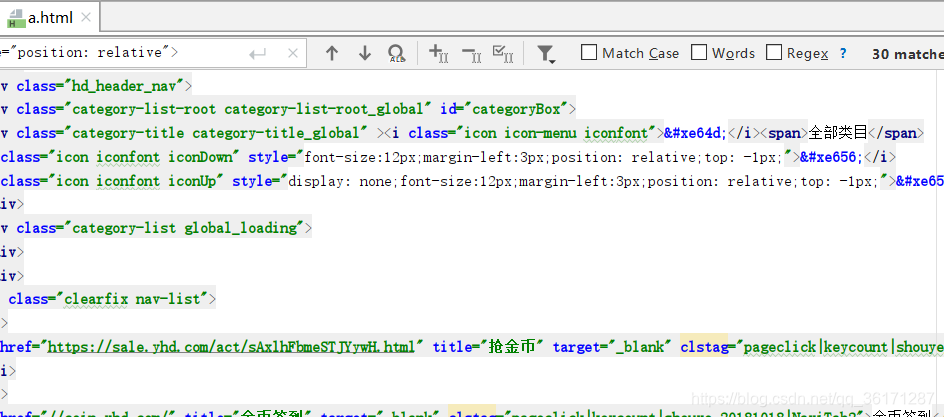

First, save the source of the Yihaodian search page to a local HTML file:

    import urllib.request
    import os
    import re


    def imageCrawler(url, topath):
        # Send a browser-like User-Agent so the request is not rejected
        headers = {
            'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/39.0.2171.95 Safari/537.36 OPR/26.0.1656.60'
        }
        req = urllib.request.Request(url, headers=headers)
        response = urllib.request.urlopen(req)
        html = response.read()
        # Write the raw bytes of the page to a.html
        with open(r'a.html', 'wb') as f:
            f.write(html)


    url = 'https://search.yhd.com/c0-0/k%25E6%2597%25B6%25E5%25B0%259A%25E8%25A3%2599%25E8%25A3%2585/'
    toPath = r'C:\Users\asus\Desktop\img'
    imageCrawler(url, toPath)

The page source is saved as a.html.
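The keyword segment of the search URL looks unusual because it has been percent-encoded twice: every `%` of the first encoding was itself encoded as `%25`. The following is a minimal sketch, using only the standard `urllib.parse` module, of how to decode it and how to rebuild the same segment for another keyword. The variable names are illustrative and are not part of the original code.

```python
from urllib.parse import quote, unquote

# Keyword segment copied from the search URL (without the leading "k").
encoded = '%25E6%2597%25B6%25E5%25B0%259A%25E8%25A3%2599%25E8%25A3%2585'

once = unquote(encoded)   # first pass turns every %25 back into %
keyword = unquote(once)   # second pass decodes the UTF-8 bytes

print(once)
print(keyword)

# Going the other way: encode a keyword twice to rebuild the same segment.
rebuilt = quote(quote(keyword))
print(rebuilt == encoded)
```

To search for a different keyword, the same double `quote` call could be applied to it and the result placed after the `k` in the URL path, assuming the site keeps this URL scheme.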

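In the page source, find the img src attribute of each image.

Then use a regular expression with a (.*?) capture group to extract the image addresses, and download each image to local storage with urllib.request.urlretrieve.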
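The extraction step can be tried offline before touching the network. The sketch below assumes that the images use protocol-relative addresses of the form `src="//host/path"`, which is suggested by the way the article prepends `'http://'` to each match. The sample markup, the pattern, and the helper names `extract_image_urls` and `plan_downloads` are all hypothetical; the real page layout may differ.

```python
import os
import re

# Hypothetical sample markup; the real page layout may differ.
SAMPLE_HTML = '''
<div class="item">
    <img src="//img.example.com/a/1.jpg" alt="one"/>
</div>
<div class="item">
    <img alt="two"
         src="//img.example.com/b/2.jpg"/>
</div>
'''

# Assumed pattern: capture whatever follows src="// up to the closing quote.
IMG_SRC = re.compile(r'<img[^>]*?src="//(.*?)"', re.S)


def extract_image_urls(html):
    """Return absolute URLs for every protocol-relative img src in html."""
    return ['http://' + addr for addr in IMG_SRC.findall(html)]


def plan_downloads(urls, topath):
    """Pair each URL with a numbered .jpg path under topath."""
    return [(u, os.path.join(topath, str(i) + '.jpg'))
            for i, u in enumerate(urls, start=1)]


urls = extract_image_urls(SAMPLE_HTML)
jobs = plan_downloads(urls, 'img')
for u, path in jobs:
    print(u, '->', path)
    # urllib.request.urlretrieve(u, filename=path)  # the actual download
```

Separating the URL extraction from the download makes it possible to inspect the list of matches first; the commented `urlretrieve` line is where the real download would happen once `urllib.request` is imported.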

Final code:

    import urllib.request
    import os
    import re


    def imageCrawler(url, topath):
        headers = {
            'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/39.0.2171.95 Safari/537.36 OPR/26.0.1656.60'
        }
        req = urllib.request.Request(url, headers=headers)
        response = urllib.request.urlopen(req)
        html = response.read().decode('utf-8')
        # with open(r'a.html','wb') as f:
        #     f.write(html)
        # pat = r' (.*?) '
        # The HTML tags inside the pattern below are missing from the source text.
        pat = r' \n ) '
        # re.S lets the pattern match across line breaks
        re_image = re.compile(pat, re.S)
        imagelist = re_image.findall(html)
        print(imagelist)
        print(len(imagelist))
        # print(imagelist[0])
        num = 1
        for imageurl in imagelist:
            # Name the files 1.jpg, 2.jpg, ...
            path = os.path.join(topath, str(num) + '.jpg')
            num += 1
            # Download the image to local storage
            urllib.request.urlretrieve('http://' + imageurl, filename=path)


    url = 'https://search.yhd.com/c0-0/k%25E6%2597%25B6%25E5%25B0%259A%25E8%25A3%2599%25E8%25A3%2585/'
    toPath = r'C:\Users\asus\Desktop\img'
    imageCrawler(url, toPath)

Output:

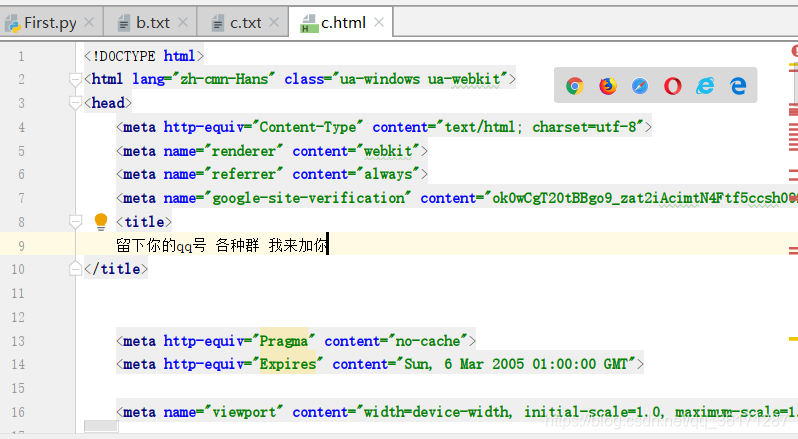

Exercise: Scrape QQ numbers from the web

Scrape QQ numbers from a Douban group topic page.
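A QQ number is matched in this exercise with the pattern `[1-9]\d{4,9}`, that is, a run of 5 to 10 digits that does not start with 0. That pattern also matches fragments of longer digit strings such as IDs or timestamps. The sketch below compares it with a stricter variant that uses lookarounds; the stricter pattern is an assumption added for illustration and is not from the original article, and the sample text is made up.

```python
import re

# Made-up sample text; the numbers are not real accounts.
text = 'QQ: 12345678, group 987654321, topic id 110094603123456, tel 01234'

# Pattern used in the article: any run of 5 to 10 digits not starting with 0.
loose = re.compile(r'[1-9]\d{4,9}')

# Stricter variant (an assumption, not from the original): refuse matches
# that are glued to other digits on either side.
strict = re.compile(r'(?<!\d)[1-9]\d{4,9}(?!\d)')

loose_hits = loose.findall(text)
strict_hits = strict.findall(text)

print(loose_hits)   # includes pieces cut out of the 15-digit id
print(strict_hits)  # only the standalone 5 to 10 digit numbers
```

Even the stricter pattern cannot tell a QQ number from any other standalone number of the same length, so results from a real page still need manual checking.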

Code:

    import urllib.request
    import os
    import re
    import ssl
    from collections import deque


    def writeFile1(htmlBytes, topath):
        with open(topath, 'wb') as f:
            f.write(htmlBytes)


    def writeFileStr(htmlBytes, topath):
        with open(topath, 'wb') as f:
            f.write(htmlBytes)


    def gethtmlbytes(url):
        headers = {
            'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/39.0.2171.95 Safari/537.36 OPR/26.0.1656.60'
        }
        req = urllib.request.Request(url, headers=headers)
        # Skip HTTPS certificate verification
        context = ssl._create_unverified_context()
        response = urllib.request.urlopen(req, context=context)
        return response.read()


    def qqCrawler(url, topath):
        htmlbytes = gethtmlbytes(url)
        # writeFile1(htmlbytes, r'c.html')
        # writeFileStr(htmlbytes, r'c.txt')
        # Decode to text; str(htmlbytes) would give the b'...' repr with escape sequences
        htmlStr = htmlbytes.decode('utf-8', errors='ignore')
        # Extract the QQ numbers
        pat = r'[1-9]\d{4,9}'
        re_qq = re.compile(pat)
        qqlist = re_qq.findall(htmlStr)
        # Deduplicate the QQ number list
        qqlist = list(set(qqlist))
        # Append the QQ numbers to the txt file
        with open(topath, 'a') as f:
            for qqstr in qqlist:
                f.write(qqstr + '\n')
        # print(qqlist)
        # print(len(qqlist))
        # Extract the URLs found in the page
        pat = r'(((http|ftp|https)://)(([a-zA-Z0-9\._-]+\.[a-zA-Z]{2,6})|([0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}))(:[0-9]{1,4})*(/[a-zA-Z0-9\&%_\./-~-]*)?)'
        re_url = re.compile(pat)
        urllist = re_url.findall(htmlStr)
        # Deduplicate the URL list
        urllist = list(set(urllist))
        # print(urllist)
        # print(len(urllist))
        # print(urllist[10])
        return urllist


    url = 'https://www.douban.com/group/topic/110094603/'
    topath = r'b.txt'
    # qqCrawler(url, topath)


    # Central controller: breadth-first crawl driven by a queue of URLs
    def center(url, topath):
        queue = deque()
        queue.append(url)
        while len(queue) != 0:
            targetUrl = queue.popleft()
            urllist = qqCrawler(targetUrl, topath)
            for item in urllist:
                # Each match is a tuple of groups; item[0] is the full URL
                tempurl = item[0]
                queue.append(tempurl)


    center(url, topath)

Result: the crawl can run for a very long time, so I stopped it manually.
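One reason the crawl runs for so long is that the queue never remembers which URLs it has already seen, so pages that link to each other are fetched again and again. The following is a minimal sketch of a bounded breadth-first crawl with a visited set and a page limit. The function `crawl`, its `get_links` and `max_pages` parameters, and the in-memory `FAKE_WEB` data are hypothetical names introduced here for illustration; they are not part of the original code.

```python
from collections import deque


def crawl(start_url, get_links, max_pages=50):
    """Breadth-first crawl that visits each URL at most once.

    get_links(url) must return an iterable of URLs found on that page.
    """
    queue = deque([start_url])
    seen = {start_url}
    visited = []
    while queue and len(visited) < max_pages:
        target = queue.popleft()
        try:
            links = get_links(target)
        except Exception as exc:  # a dead link should not stop the crawl
            print('skip', target, exc)
            continue
        visited.append(target)
        for link in links:
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return visited


# Tiny in-memory "web" standing in for real pages (hypothetical data).
FAKE_WEB = {
    'a': ['b', 'c'],
    'b': ['a', 'c'],
    'c': ['a', 'd'],
}

order = crawl('a', lambda url: FAKE_WEB[url])
limited = crawl('a', lambda url: FAKE_WEB[url], max_pages=2)
print(order)
print(limited)
```

With the article's functions, the same loop could be driven by something like `crawl(url, lambda u: [item[0] for item in qqCrawler(u, topath)])`, since `qqCrawler` returns a list of tuples whose first element is the full URL.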

Let's learn and improve together -.- If you spot any mistakes, feel free to leave a comment.

Reposted from: https://kongchengji.blog.csdn.net/article/details/95628934
