
I. Introduction

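Today is Day 10. We use an LSTM to predict a stock's opening price. The final R2 reaches 0.74, two percentage points higher than the 0.72 achieved by a conventional RNN.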

My environment:

- Language: Python 3.6.5

- Editor: Jupyter Notebook

- Deep learning framework: TensorFlow 2.4.1


II. What Is LSTM?

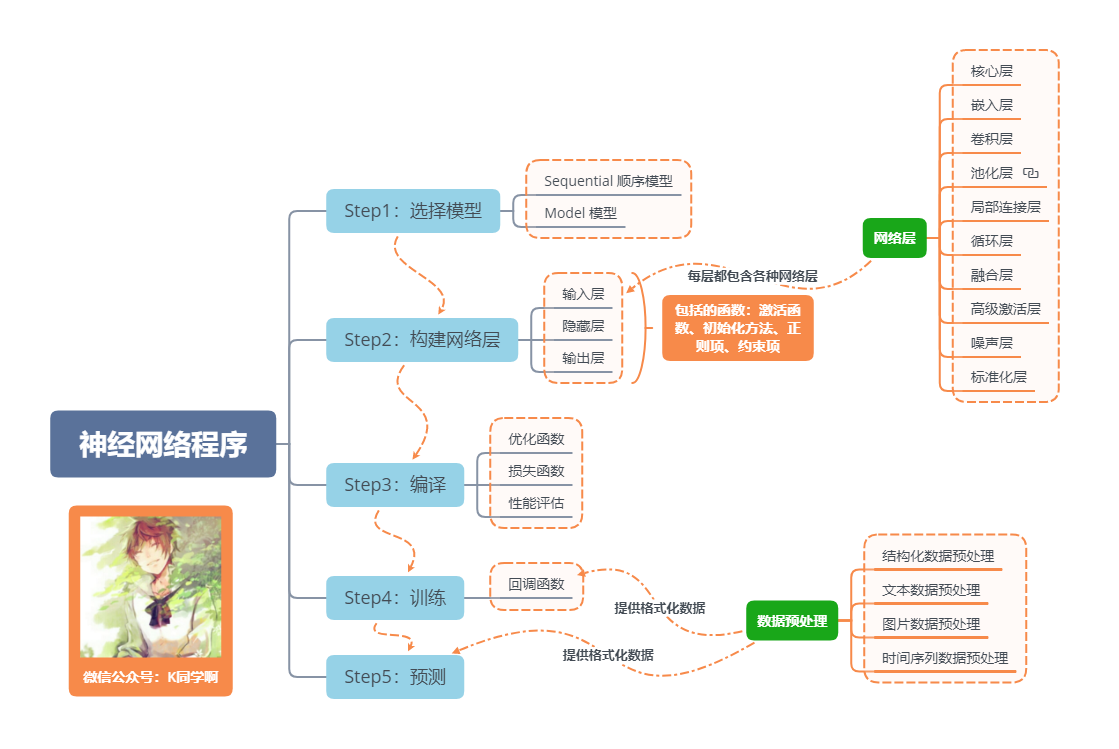

Basic workflow of a neural network program

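In one sentence: LSTM is an advanced version of the RNN. Loosely speaking, if an RNN can at best understand a sentence, an LSTM can understand a paragraph. A more detailed introduction follows.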

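LSTM, short for Long Short-Term Memory network, is a special kind of RNN that can learn long-term dependencies. It was proposed by Hochreiter & Schmidhuber (1997), and many researchers have since refined and popularized it. LSTM works very well on a wide range of problems and is now widely used.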

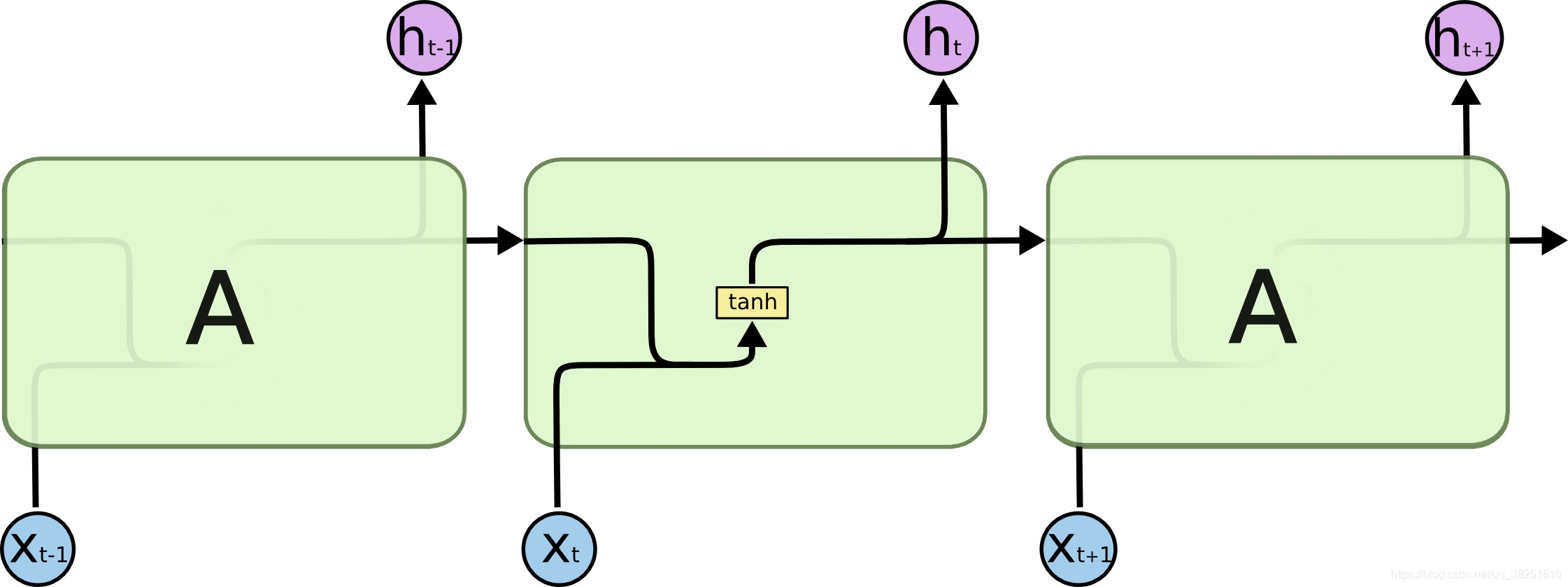

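All recurrent neural networks take the form of a chain of repeating neural network modules. In a standard RNN, this repeating module has a very simple structure, shown below: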

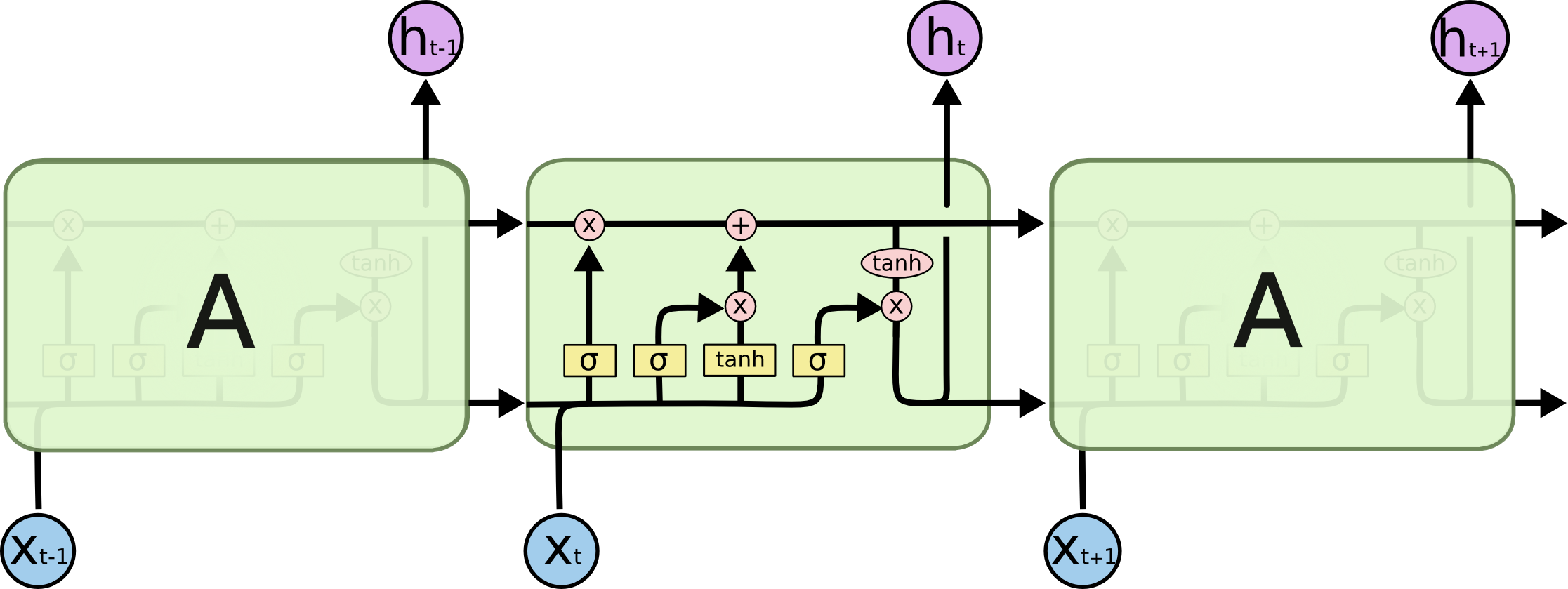

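LSTM avoids the long-term dependency problem and can retain information over long spans. Its internal structure is more complex than that of a plain RNN. Gates control which information is passed along, so the cell keeps what must be remembered for a long time and forgets what is unimportant.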
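To make the gating description concrete, here is a minimal NumPy sketch of LSTM time steps. It assumes the standard formulation with a forget gate, an input gate, a candidate cell state and an output gate, and it uses tanh as the cell activation. The helper `lstm_step` and the random weights are hypothetical and only illustrate the data flow. Keras's `LSTM` layer stores its weights in its own layout. Also note that this post configures the layer with `activation='relu'`, which replaces tanh.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, W, U, b):
    """One LSTM time step (hypothetical helper for illustration).

    x_t: input at time t, shape (input_dim,)
    h_prev, c_prev: previous hidden and cell states, shape (units,)
    W: input kernels (input_dim, 4*units); U: recurrent kernels (units, 4*units)
    b: biases (4*units,). Gate order in the stacked weights: input, forget, candidate, output.
    """
    z = x_t @ W + h_prev @ U + b
    i, f, g, o = np.split(z, 4)
    i = sigmoid(i)           # input gate: how much new information to write
    f = sigmoid(f)           # forget gate: how much of the old cell state to keep
    g = np.tanh(g)           # candidate values for the cell state
    o = sigmoid(o)           # output gate: how much of the cell state to expose
    c_t = f * c_prev + i * g # update the long-term memory (cell state)
    h_t = o * np.tanh(c_t)   # new hidden state (the step's output)
    return h_t, c_t

rng = np.random.default_rng(0)
input_dim, units = 1, 50
W = rng.normal(scale=0.1, size=(input_dim, 4 * units))
U = rng.normal(scale=0.1, size=(units, 4 * units))
b = np.zeros(4 * units)

h = np.zeros(units)
c = np.zeros(units)
sequence = rng.random((40, input_dim))  # e.g. a window of 40 scaled prices
for x_t in sequence:
    h, c = lstm_step(x_t, h, c, W, U, b)

print(h.shape, c.shape)  # (50,) (50,)
```

The additive update `f * c_prev + i * g` is what lets information survive many steps. With tanh as the activation, the hidden state stays in (-1, 1).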

III. Preparation

1. Set Up the GPU

If you are running on a CPU, you can comment out this part of the code.

```python
import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:
    tf.config.experimental.set_memory_growth(gpus[0], True)  # allocate GPU memory on demand
    tf.config.set_visible_devices([gpus[0]], "GPU")
```

2. Set Parameters

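```python
import pandas as pd
import tensorflow as tf
import numpy as np
import matplotlib.pyplot as plt

# Chinese font support
plt.rcParams['font.sans-serif'] = ['SimHei']  # display Chinese labels correctly
plt.rcParams['axes.unicode_minus'] = False    # display minus signs correctly

from numpy import array
from sklearn import metrics
from sklearn.preprocessing import MinMaxScaler
# Use tf.keras so the layers match tf.keras.optimizers.Adam used below
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Dense, LSTM, Bidirectional

# Make results as reproducible as possible
from numpy.random import seed
seed(1)
tf.random.set_seed(1)

# Parameters
n_timestamp = 40  # number of time steps per input window
n_epochs = 20     # number of training epochs

# ====================================
# Choose a model:
# 1: single-layer LSTM
# 2: stacked (multi-layer) LSTM
# 3: bidirectional LSTM
# ====================================
model_type = 1
```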

3. Load the Data

```python
data = pd.read_csv('./datasets/SH600519.csv')  # read the stock data file
data
```

| | Unnamed: 0 | date | open | close | high | low | volume | code |
|---|---|---|---|---|---|---|---|---|
| 0 | 74 | 2010-04-26 | 88.702 | 87.381 | 89.072 | 87.362 | 107036.13 | 600519 |
| 1 | 75 | 2010-04-27 | 87.355 | 84.841 | 87.355 | 84.681 | 58234.48 | 600519 |
| 2 | 76 | 2010-04-28 | 84.235 | 84.318 | 85.128 | 83.597 | 26287.43 | 600519 |
| 3 | 77 | 2010-04-29 | 84.592 | 85.671 | 86.315 | 84.592 | 34501.20 | 600519 |
| 4 | 78 | 2010-04-30 | 83.871 | 82.340 | 83.871 | 81.523 | 85566.70 | 600519 |
| ... | ... | ... | ... | ... | ... | ... | ... | ... |
| 2421 | 2495 | 2020-04-20 | 1221.000 | 1227.300 | 1231.500 | 1216.800 | 24239.00 | 600519 |
| 2422 | 2496 | 2020-04-21 | 1221.020 | 1200.000 | 1223.990 | 1193.000 | 29224.00 | 600519 |
| 2423 | 2497 | 2020-04-22 | 1206.000 | 1244.500 | 1249.500 | 1202.220 | 44035.00 | 600519 |
| 2424 | 2498 | 2020-04-23 | 1250.000 | 1252.260 | 1265.680 | 1247.770 | 26899.00 | 600519 |
| 2425 | 2499 | 2020-04-24 | 1248.000 | 1250.560 | 1259.890 | 1235.180 | 19122.00 | 600519 |

2426 rows × 8 columns

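```python
# Opening prices of the first 2426 - 300 = 2126 days form the training set;
# those of the last 300 days form the test set (column index 2 is "open")
training_set = data.iloc[0:2426 - 300, 2:3].values
test_set = data.iloc[2426 - 300:, 2:3].values
```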

IV. Data Preprocessing

1. Normalization

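```python
# Scale the data to the range [0, 1]
sc = MinMaxScaler(feature_range=(0, 1))
training_set_scaled = sc.fit_transform(training_set)  # learn min/max from the training set
testing_set_scaled = sc.transform(test_set)           # reuse the training min/max
```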
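`MinMaxScaler(feature_range=(0, 1))` maps each value with x' = (x - min) / (max - min). The min and max are learned from the data passed to `fit_transform`, which here is the training set only, and `inverse_transform` undoes the mapping. Below is a minimal NumPy sketch of the same arithmetic, using made-up prices. Test values above the training maximum map above 1. This is expected when the scaler is fit on the training data only, which keeps test-set statistics out of preprocessing.

```python
import numpy as np

# Made-up opening prices, shaped (n_samples, 1) like data.iloc[..., 2:3].values
train = np.array([[80.0], [100.0], [120.0]])
test = np.array([[110.0], [140.0]])

# "fit": learn min and max from the training set only
data_min = train.min(axis=0)
data_max = train.max(axis=0)

def scale(x):
    # x' = (x - min) / (max - min), i.e. feature_range=(0, 1)
    return (x - data_min) / (data_max - data_min)

def unscale(x_scaled):
    # inverse transform: x = x' * (max - min) + min
    return x_scaled * (data_max - data_min) + data_min

train_scaled = scale(train)  # [[0.0], [0.5], [1.0]]
test_scaled = scale(test)    # [[0.75], [1.5]]: 140 exceeds the training max
print(train_scaled.ravel(), test_scaled.ravel())
print(unscale(test_scaled).ravel())  # recovers [110. 140.]
```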

2. Time-Step (Sliding-Window) Function

```python
# Use the previous n_timestamp days as X and the following day (day n_timestamp+1) as y
def data_split(sequence, n_timestamp):
    X = []
    y = []
    for i in range(len(sequence)):
        end_ix = i + n_timestamp
        if end_ix > len(sequence) - 1:
            break
        seq_x, seq_y = sequence[i:end_ix], sequence[end_ix]
        X.append(seq_x)
        y.append(seq_y)
    return array(X), array(y)

X_train, y_train = data_split(training_set_scaled, n_timestamp)
X_train = X_train.reshape(X_train.shape[0], X_train.shape[1], 1)  # (samples, time steps, features)

X_test, y_test = data_split(testing_set_scaled, n_timestamp)
X_test = X_test.reshape(X_test.shape[0], X_test.shape[1], 1)
```
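The next sketch shows what `data_split` produces. It builds the same windows with NumPy fancy indexing on a toy sequence of 10 values with a window of 3, then compares them with the loop version. The helper name `data_split_vectorized` is hypothetical. For a sequence of length n, both versions yield n - n_timestamp samples.

```python
import numpy as np

def data_split(sequence, n_timestamp):
    # Loop version from the post: previous n_timestamp values -> next value
    X, y = [], []
    for i in range(len(sequence)):
        end_ix = i + n_timestamp
        if end_ix > len(sequence) - 1:
            break
        X.append(sequence[i:end_ix])
        y.append(sequence[end_ix])
    return np.array(X), np.array(y)

def data_split_vectorized(sequence, n_timestamp):
    # Hypothetical equivalent: row j of idx holds indices j, j+1, ..., j+n_timestamp-1
    n_samples = len(sequence) - n_timestamp
    idx = np.arange(n_samples)[:, None] + np.arange(n_timestamp)[None, :]
    return sequence[idx], sequence[n_timestamp:]

seq = np.arange(10, dtype=float).reshape(-1, 1)  # toy series 0..9, shape (10, 1)
X, y = data_split_vectorized(seq, 3)
print(X.shape, y.shape)    # (7, 3, 1) (7, 1)
print(X[0].ravel(), y[0])  # [0. 1. 2.] [3.]

X_loop, y_loop = data_split(seq, 3)
print(np.array_equal(X, X_loop) and np.array_equal(y, y_loop))  # True
```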

V. Build the Model

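```python
# Build the LSTM model
# Note: activation='relu' does not meet the cuDNN kernel criteria (cuDNN requires the
# default activation='tanh'), which is why TensorFlow prints the warning shown below
if model_type == 1:
    # Single-layer LSTM
    model = Sequential()
    model.add(LSTM(units=50, activation='relu',
                   input_shape=(X_train.shape[1], 1)))
    model.add(Dense(units=1))
elif model_type == 2:
    # Stacked LSTM: return_sequences=True passes the whole output sequence to the next LSTM
    model = Sequential()
    model.add(LSTM(units=50, activation='relu', return_sequences=True,
                   input_shape=(X_train.shape[1], 1)))
    model.add(LSTM(units=50, activation='relu'))
    model.add(Dense(1))
elif model_type == 3:
    # Bidirectional LSTM
    model = Sequential()
    model.add(Bidirectional(LSTM(50, activation='relu'),
                            input_shape=(X_train.shape[1], 1)))
    model.add(Dense(1))

model.summary()  # print the model structure
```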

```
WARNING:tensorflow:Layer lstm will not use cuDNN kernel since it doesn't meet the cuDNN kernel criteria. It will use generic GPU kernel as fallback when running on GPU
Model: "sequential"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
lstm (LSTM)                  (None, 50)                10400
_________________________________________________________________
dense (Dense)                (None, 1)                 51
=================================================================
Total params: 10,451
Trainable params: 10,451
Non-trainable params: 0
_________________________________________________________________
```
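The parameter counts in the summary can be checked by hand. Each of the four LSTM gates has an input kernel (input_dim × units), a recurrent kernel (units × units) and a bias (units). The LSTM layer therefore has 4 × (input_dim·units + units² + units) weights. The Dense layer has 50 weights plus 1 bias. The helper names below are hypothetical.

```python
def lstm_param_count(input_dim, units):
    # 4 gates x (input kernel + recurrent kernel + bias)
    return 4 * (input_dim * units + units * units + units)

def dense_param_count(input_dim, units):
    # weight matrix + bias vector
    return input_dim * units + units

lstm_params = lstm_param_count(input_dim=1, units=50)    # 4 * (50 + 2500 + 50)
dense_params = dense_param_count(input_dim=50, units=1)  # 50 + 1
print(lstm_params, dense_params, lstm_params + dense_params)  # 10400 51 10451
```

For `model_type == 3`, `Bidirectional` holds two such LSTMs and by default concatenates their outputs. The same formulas then give 2 × 10400 = 20800 LSTM parameters and 100 + 1 = 101 Dense parameters.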

VI. Compile the Model

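```python
# This task only monitors the loss, not accuracy, so the metrics argument is omitted;
# each epoch will then report only the loss values
model.compile(optimizer=tf.keras.optimizers.Adam(0.001),
              loss='mean_squared_error')  # mean squared error as the loss function
```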

VII. Train the Model

```python
history = model.fit(X_train, y_train,
                    batch_size=64,
                    epochs=n_epochs,
                    validation_data=(X_test, y_test),
                    validation_freq=1)  # number of epochs between validation runs
model.summary()
```

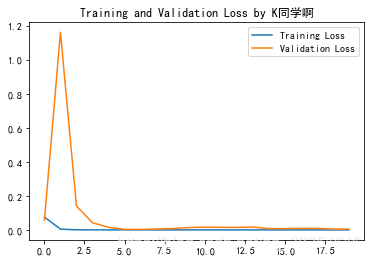

```
Epoch 1/20
33/33 [==============================] - 5s 107ms/step - loss: 0.1049 - val_loss: 0.0569
Epoch 2/20
33/33 [==============================] - 3s 86ms/step - loss: 0.0074 - val_loss: 1.1616
Epoch 3/20
33/33 [==============================] - 3s 83ms/step - loss: 0.0012 - val_loss: 0.1408
Epoch 4/20
33/33 [==============================] - 3s 78ms/step - loss: 5.8758e-04 - val_loss: 0.0421
Epoch 5/20
33/33 [==============================] - 3s 84ms/step - loss: 5.3411e-04 - val_loss: 0.0159
Epoch 6/20
33/33 [==============================] - 3s 81ms/step - loss: 3.9690e-04 - val_loss: 0.0034
Epoch 7/20
33/33 [==============================] - 3s 84ms/step - loss: 4.3521e-04 - val_loss: 0.0032
Epoch 8/20
33/33 [==============================] - 3s 85ms/step - loss: 3.8233e-04 - val_loss: 0.0059
Epoch 9/20
33/33 [==============================] - 3s 81ms/step - loss: 3.6539e-04 - val_loss: 0.0082
Epoch 10/20
33/33 [==============================] - 3s 81ms/step - loss: 3.1790e-04 - val_loss: 0.0141
Epoch 11/20
33/33 [==============================] - 3s 82ms/step - loss: 3.5332e-04 - val_loss: 0.0166
Epoch 12/20
33/33 [==============================] - 3s 86ms/step - loss: 3.2684e-04 - val_loss: 0.0155
Epoch 13/20
33/33 [==============================] - 3s 80ms/step - loss: 2.6495e-04 - val_loss: 0.0149
Epoch 14/20
33/33 [==============================] - 3s 84ms/step - loss: 3.1398e-04 - val_loss: 0.0172
Epoch 15/20
33/33 [==============================] - 3s 80ms/step - loss: 3.4533e-04 - val_loss: 0.0077
Epoch 16/20
33/33 [==============================] - 3s 81ms/step - loss: 2.9621e-04 - val_loss: 0.0082
Epoch 17/20
33/33 [==============================] - 3s 83ms/step - loss: 2.2228e-04 - val_loss: 0.0092
Epoch 18/20
33/33 [==============================] - 3s 86ms/step - loss: 2.4517e-04 - val_loss: 0.0093
Epoch 19/20
33/33 [==============================] - 3s 86ms/step - loss: 2.7179e-04 - val_loss: 0.0053
Epoch 20/20
33/33 [==============================] - 3s 82ms/step - loss: 2.5923e-04 - val_loss: 0.0054
Model: "sequential"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
lstm (LSTM)                  (None, 50)                10400
_________________________________________________________________
dense (Dense)                (None, 1)                 51
=================================================================
Total params: 10,451
Trainable params: 10,451
Non-trainable params: 0
_________________________________________________________________
```
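The `33/33` in the log is the number of batches per epoch. With the split, window length and `batch_size=64` used here, a little arithmetic confirms it:

```python
import math

n_days = 2426       # rows in SH600519.csv
n_test_days = 300   # last 300 days held out
n_timestamp = 40    # window length
batch_size = 64

n_train_days = n_days - n_test_days                        # 2126
n_train_samples = n_train_days - n_timestamp               # 2086 windows from data_split
n_test_samples = n_test_days - n_timestamp                 # 260 windows
steps_per_epoch = math.ceil(n_train_samples / batch_size)  # the last batch is partial

print(n_train_samples, n_test_samples, steps_per_epoch)  # 2086 260 33
```

The test windows are built from the test set alone. As a result, the first 40 of the 300 test days serve only as input, and the prediction plot covers the last 260 days.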

VIII. Visualize the Results

1. Plot the Loss Curves

```python
plt.plot(history.history['loss'], label='Training Loss')
plt.plot(history.history['val_loss'], label='Validation Loss')
plt.title('Training and Validation Loss by K同学啊')
plt.legend()
plt.show()
```

2. Predict

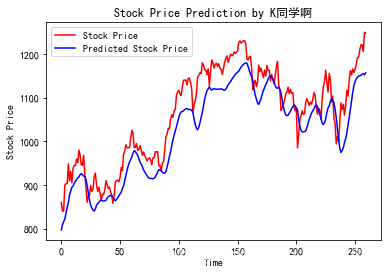

```python
predicted_stock_price = model.predict(X_test)                        # feed the test set to the model
predicted_stock_price = sc.inverse_transform(predicted_stock_price)  # undo scaling: back to the original price range
real_stock_price = sc.inverse_transform(y_test)                      # undo scaling on the true values as well

# Plot real vs. predicted prices for comparison
plt.plot(real_stock_price, color='red', label='Stock Price')
plt.plot(predicted_stock_price, color='blue', label='Predicted Stock Price')
plt.title('Stock Price Prediction by K同学啊')
plt.xlabel('Time')
plt.ylabel('Stock Price')
plt.legend()
plt.show()
```

3. Evaluate

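```python
"""
MSE  : mean squared error -----> mean of the squared differences between predictions and true values
RMSE : root mean squared error -----> square root of the MSE
MAE  : mean absolute error -----> mean of the absolute differences between predictions and true values
R2   : coefficient of determination, loosely a key statistic for how well the model fits the data
For details, see: https://blog.csdn.net/qq_38251616/article/details/107997435
"""
MSE = metrics.mean_squared_error(predicted_stock_price, real_stock_price)
RMSE = metrics.mean_squared_error(predicted_stock_price, real_stock_price) ** 0.5
MAE = metrics.mean_absolute_error(predicted_stock_price, real_stock_price)
# sklearn's signature is r2_score(y_true, y_pred) and R2 is not symmetric;
# the arguments below are in reverse order, and the R2 printed below came from this call
R2 = metrics.r2_score(predicted_stock_price, real_stock_price)

print('Mean squared error: %.5f' % MSE)
print('Root mean squared error: %.5f' % RMSE)
print('Mean absolute error: %.5f' % MAE)
print('R2: %.5f' % R2)
```

```
Mean squared error: 2688.75170
Root mean squared error: 51.85317
Mean absolute error: 44.97829
R2: 0.74036
```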
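Writing the four metrics out directly also shows why argument order matters for R2. The sketch below uses R2 = 1 - SS_res / SS_tot, where SS_tot is computed around the mean of the first (true) argument, matching scikit-learn's `r2_score(y_true, y_pred)` convention. MSE, RMSE and MAE are symmetric in their arguments, but R2 is not. The toy arrays are made up for illustration.

```python
import numpy as np

def mse(y_true, y_pred):
    return np.mean((y_pred - y_true) ** 2)

def rmse(y_true, y_pred):
    return np.sqrt(mse(y_true, y_pred))

def mae(y_true, y_pred):
    return np.mean(np.abs(y_pred - y_true))

def r2(y_true, y_pred):
    ss_res = np.sum((y_true - y_pred) ** 2)         # residual sum of squares
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # total sum of squares around the true mean
    return 1.0 - ss_res / ss_tot

y_true = np.array([1.0, 2.0, 3.0, 4.0])
y_pred = np.array([1.0, 2.0, 3.0, 5.0])

print(mse(y_true, y_pred), rmse(y_true, y_pred), mae(y_true, y_pred))  # 0.25 0.5 0.25
print(r2(y_true, y_pred))  # 0.8
print(r2(y_pred, y_true))  # about 0.8857: swapping the arguments changes R2
```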

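Besides switching models, the goodness of fit can also be improved by tuning hyperparameters. Since this post focuses on introducing LSTM, tuning is not covered in detail here.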


Reposted from: https://mtyjkh.blog.csdn.net/article/details/117907074
